Posts Tagged ‘Unity’

Unity: Using NGUI with the Oculus Rift

Many Unity developers are using Tasharen Entertainment’s NGUI as a UI solution, and quite sensibly in my opinion; it’s much better than the built-in GUI framework in many ways and I don’t think there’s anything better on the Asset Store either. I recently got it working with the dual-camera rendering of the Rift; here’s the method I used.

Basic Setup

NGUI uses its own camera to render everything on the UI layer(s) you specify. As it turns out, that’s a pretty convenient approach for Rift development, thanks to a built-in Unity feature: render textures. We’ll be rendering the UI to a texture and then displaying that, rather than allowing the UI to render directly to the screen. If you’ve snooped around the Tuscany demo scene a bit, you might recognize this as the same approach the supplied OVRMainMenu takes.

Create a render texture in your project; you’ll probably want it to be 1024×1024 or 2048×2048 for UI use. Point the NGUI camera’s “Target texture” field at your render texture. You’ll also need a new material that uses the render texture as its source, so create one and set its shader to “Unlit/Texture” for now. We’ll be coming back to shaders in a moment.

Next, create a plane (if you’re reading this IN THE FUTURE, Unity are supposed to be implementing a two-triangle optimized plane object; if that’s available, use it instead of the standard 200-triangle beast we have now). Parent it to the CameraRight camera object inside the OVRCameraController. Note that this will give you a UI which is locked to the player’s view, no matter where they are looking; if you want a UI that isn’t view-locked, one the user can look around, you might want to attach the plane object to the player object instead of directly to the camera. Position the plane in front of your player object, and orient it so that its top surface faces the player. The position you choose matters, by the way: the plane’s Z distance from the player determines how large the UI appears onscreen.
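To get a feel for how that Z distance changes the UI’s apparent size, it helps to work out the angle the plane covers in the player’s view. Here’s a minimal sketch in plain C# with no Unity types, so it runs anywhere. The class and method names are illustrative, and it assumes the plane is viewed face-on from its center.

```csharp
using System;

static class UiPlaneSizing
{
    // Vertical angle (in degrees) that a plane of the given height covers
    // when viewed face-on, from its center, at the given distance.
    public static double SubtendedAngleDegrees(double planeHeight, double distance)
    {
        double halfAngle = Math.Atan((planeHeight * 0.5) / distance);
        return 2.0 * halfAngle * 180.0 / Math.PI;
    }

    // Plane height needed to exactly fill a vertical field of view
    // at the given distance: h = 2 * d * tan(fov / 2).
    public static double HeightToFillFov(double verticalFovDegrees, double distance)
    {
        double halfFovRadians = verticalFovDegrees * 0.5 * Math.PI / 180.0;
        return 2.0 * distance * Math.Tan(halfFovRadians);
    }
}
```

For example, a plane 2 units tall at 1 unit away fills a 90° vertical view. Moving it back to 2 units shrinks that to roughly 53°. Bear in mind that the built-in Unity plane is 10 units across at a scale of 1, so the plane’s scale factors into its height too.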

Now set the material on the plane to be the render texture material you created before. If you have widgets set up in your UI, you should see them render in the scene view at this point, though the background of the UI will be a solid colour. Let’s fix that!

Shaders

We need to make a new shader to have things render correctly when using a render texture. We want the NGUI widgets to be rendered with an alpha channel. If you’re not used to shader programming, don’t worry! This is a pretty simple thing to pull off.

NGUI holds its shaders in the folder “Assets/NGUI/Resources/Shaders”. Open this path in Windows Explorer (or Finder or whatever other strange OS you might be using!). Find the “Unlit – Transparent Colored” shader and copy it; we’ll keep the original so we can reuse it, of course. Rename the copy to “Unlit – Transparent Colored RTT” (RTT being “Render To Texture” if it’s not obvious). Now open the new shader in your favourite text editor (I like Sublime Text!). First, change the name at the top to “Unlit/Transparent Colored RTT”. Next, find the line with the text “ColorMask RGB”, and delete it. That’s all there is to it! “ColorMask RGB” tells the renderer to write only the RGB values and ignore the alpha channel. With that line deleted, the shader falls back to the default of writing all four channels, alpha included, which is what we need.

Save the shader, and find your NGUI atlas in your project view inside Unity. Specifically, you want the material that it’s using, which is always stored alongside the atlas object and texture with the same name. Edit it, and change the shader to the one we just created. Now, head back to your rendering plane under the CameraRight object, and change the shader on the render texture material you created to be “Unlit/Transparent Colored”. There you go – your widgets should be rendering just how you expect them.

If you don’t see your UI, it’s time to troubleshoot!

- Is the NGUI camera pointing to a valid render texture?

- Is the render plane oriented correctly? Neither the plane nor the material is double-sided so it will be invisible from the wrong viewing angle.

- Is the render plane using the correct material?

- Is the material using the correct shader?

- Is everything on your UI in the correct layer, and does the camera’s culling mask include that layer?

As you can see there are a few potential points of failure so make sure to double-check everything as you go along.

Dealing With Clipping

One extra feature you might want is the ability for the UI plane to render over the environment, even if it would ordinarily be obscured by it. If you can stand to set up another shader we can fix that too!

In the NGUI shader folder, again copy “Unlit – Transparent Colored.shader” and rename the copy to “Unlit – Transparent Colored NoClip”. Edit the shader, change the name at the top to “Unlit/Transparent Colored NoClip”, and then look for the “Queue” line in the “Tags” group. Change “Transparent” to “Overlay”. This tells Unity to render this object in the final render queue group, after everything else in the world. Then find the “ZWrite Off” line, and right underneath it add “ZTest Always”. This disables the depth test, so the material is always drawn regardless of what else is already at that point onscreen.

Now change your render texture material (used on the plane object) to use this shader, and your UI will render on top of everything in the world. I suppose you might run into issues if you’re using fullscreen effects as they use the Overlay render queue too; I haven’t tried that out yet. Otherwise, you should have a nice-looking NGUI UI in your Rift!

Unity Planetoid Experiments, Part 2

In the first part of this article, I covered planetoid creation and navigation. Let’s press right on into how we navigate BETWEEN the planets!

Jetpackin’

I actually approached this project as first and foremost a jetpack “simulator” (though that’s a bit of a grand term). Even before the planetoids and multiple gravity sources, the first thing I implemented was my jetpack control method.

The basic idea is that the pack operates via thrust vectoring, with one or more moving nozzles providing lateral as well as vertical thrust. If the player leaves the directional controls alone, the nozzle(s) point downwards, giving vertical lift. If they push the stick forwards, the nozzles rotate backwards to drive them in the direction they expect. Of course, you lose lift at the same time, so it becomes a balancing act: finding enough forward speed without dropping yourself out of the sky.

The system I went with is physics-based; the player object has a non-kinematic rigidbody, and the user’s inputs are turned into forces. The first step of that is to turn them into a nozzle orientation, which is nice and simple:

// The maximum amount in degrees the nozzle can rotate on each axis
const float kMaxThrustNozzleRotation = 80.0f;

// Nozzle rotation around the X axis is driven by the left stick Y axis
// (forward/back)
float xRot = controllerAxisLeftY * kMaxThrustNozzleRotation;

// Nozzle rotation around the Z axis is driven by the left stick X axis
// (left/right)
float zRot = controllerAxisLeftX * -kMaxThrustNozzleRotation;

// Build the rotation quaternion
Quaternion rot = Quaternion.Euler(xRot, 0.0f, zRot);

// Our thrust direction is the up vector rotated by the quaternion,
// transformed from the player object's local space into world space
Vector3 thrustDirection = transform.TransformDirection(rot * Vector3.up);

Then all we need to do is scale thrustDirection by our input value (I use the trigger so we can apply variable amounts of thrust), and apply the thrust as a force with rigidbody.AddForce(jetpackThrust) in the FixedUpdate function.
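Putting the nozzle orientation and the trigger scaling together, the whole input-to-force step can be sketched outside Unity. This version is plain C#, with System.Numerics standing in for Unity’s Vector3 and Quaternion. Here playerRotation plays the role of transform.rotation, and maxThrust is a hypothetical tuning value. Treat it as an illustration of the maths rather than drop-in jetpack code.

```csharp
using System;
using System.Numerics;

static class JetpackSketch
{
    // Mirrors kMaxThrustNozzleRotation from the snippet above (degrees).
    const float MaxThrustNozzleRotation = 80.0f;

    static float ToRadians(float degrees) => degrees * (float)Math.PI / 180.0f;

    // World-space thrust force for the given stick and trigger inputs.
    // playerRotation stands in for transform.rotation; maxThrust is hypothetical.
    public static Vector3 ThrustForce(float stickX, float stickY, float trigger,
                                      Quaternion playerRotation, float maxThrust)
    {
        float xRot = stickY * MaxThrustNozzleRotation;   // forward/back tilt
        float zRot = stickX * -MaxThrustNozzleRotation;  // left/right tilt

        // With yaw = 0 this applies the Z (roll) rotation first and then the
        // X (pitch) rotation, the same order Unity's Quaternion.Euler uses.
        Quaternion nozzle = Quaternion.CreateFromYawPitchRoll(
            0.0f, ToRadians(xRot), ToRadians(zRot));

        // Rotate local up by the nozzle, then take it from local to world space
        // (the equivalent of transform.TransformDirection).
        Vector3 localDirection = Vector3.Transform(Vector3.UnitY, nozzle);
        Vector3 worldDirection = Vector3.Transform(localDirection, playerRotation);

        // Scale by the trigger value so thrust is variable.
        return worldDirection * (trigger * maxThrust);
    }
}
```

In Unity, the returned vector is what would be handed to rigidbody.AddForce in FixedUpdate.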

Orbits

The planets I described in the first article were static. That’s fine, but planets and moons usually orbit others, and it would be cool to have that working.

Luckily it’s simple to do. I just added a rigid body to my planetoids, set all drag to zero, set them up with a system that applies an initial force (for movement) and torque (for spin), and put them around another gravitational body. As long as you get the initial force close to correct, they’ll fall into a nice orbit.
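Getting the initial push close to correct is easier with a little maths. For a circular orbit, the centripetal acceleration v^2 / r has to match the gravitational acceleration a at that radius, so the tangential speed is v = sqrt(a * r). This holds whatever falloff curve your gravity sources use, as long as you plug in the acceleration at the orbit radius. Below is a minimal sketch in plain C#, with System.Numerics in place of Unity’s types. The orbitNormal parameter is an assumption for the example; it picks the plane of the orbit. If the push is applied as a one-off velocity change (ForceMode.VelocityChange in Unity) rather than a force, this vector is what you’d pass in.

```csharp
using System;
using System.Numerics;

static class OrbitSketch
{
    // Circular orbit: v^2 / r must equal the gravity acceleration a at that
    // radius, so v = sqrt(a * r).
    public static float CircularOrbitSpeed(float gravityAcceleration, float radius)
    {
        return (float)Math.Sqrt(gravityAcceleration * radius);
    }

    // Initial velocity for a body at 'position' orbiting 'center'.
    // 'orbitNormal' is the axis the orbit turns around; it is assumed not to be
    // parallel to the radius, otherwise the tangent is undefined.
    public static Vector3 CircularOrbitVelocity(Vector3 position, Vector3 center,
                                                Vector3 orbitNormal, float gravityAcceleration)
    {
        Vector3 radial = position - center;
        float radius = radial.Length();
        // Tangent direction: perpendicular to both the radius and the orbit axis.
        Vector3 tangent = Vector3.Normalize(Vector3.Cross(orbitNormal, radial));
        return tangent * CircularOrbitSpeed(gravityAcceleration, radius);
    }
}
```

For example, with a gravity acceleration of 2.5 at a radius of 10, the orbit speed comes out at 5.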

Ah, but what will happen if something bumps into them? Well, even if their rigid body has an extremely high mass, if the other object’s is set to kinematic it will disturb them. The planetoid will probably deorbit, with no doubt tragic consequences for all involved.

The workaround for this is to create another object with which all collisions will take place. It will shadow the planet without actually being parented (as parenting it would impart the results of any collision back to its parent’s rigid body).

Take any collider off the original planet, give one to the shadow object instead, then every update set its transform position and rotation to be the same as its owner. The timing for this operation is important; I tried LateUpdate (collider will lag by a frame) and FixedUpdate (collider won’t update smoothly) first, but Update is the magic bullet which will give you the correct position and orientation.

The effect of this is that any object will now collide with your fake shell and not the planet itself, which will remain blissfully unaffected by the otherwise catastrophic results of innocent kinematic players landing on its surface.

But hang on – why is the player using a kinematic rigid body? Didn’t we just discuss a physics-based jetpack system?

Landing on Planetoids

Those of you paying attention will note that in the last article I cautioned against the use of a force-based movement system for our character when on the ground, and yet here I am using one for my jetpack. Before I get into why I’m doing that, let’s see why using forces for character movement is a bad idea when attaching to planets in the first place.

When landing on an orbiting planetoid, how do we keep our character attached to it as it moves through space? Well, the normal thing to do is to parent our character to the object it’s just landed on. Parenting means that our character’s transformation matrix will be transformed relative to the parent’s matrix and thus all of our movement becomes local to that parent object. In effect, we’re “stuck” to it.

The problem is this: parenting non-kinematic rigid bodies together is a no-no. In fact, you shouldn’t parent non-kinematic rigid bodies to any moving object. It might seem to work most of the time, but you’ll get odd effects when you least expect them; the parent’s transform updates don’t play nice with force-based movement.

So what do we do? We actually have a few options. We could potentially use a joint, such as a FixedJoint, which is a method of attaching rigid bodies together. However, this behaves differently from the standard parent-child relationship, and wouldn’t work for our needs here. Another approach would be to go to a completely kinematic solution – replacing the force-based jetpack system with a method of updating the object transform manually, which would involve keeping track of my own accumulated thrust vectors, collisions and so on. Frankly, though, this seemed like a lot of work, and I already had a movement system that felt great which I didn’t want to wreck. So I decided to switch my character’s rigid body between kinematic and non-kinematic depending on whether it was on the ground.

A kinematic rigid body is one which doesn’t respond to forces. If you set one up by checking the Is Kinematic box in the inspector, it’s expected that you control it manually by updating its transform. So what I do is when the player is jetpacking through space, his rigid body is a standard non-kinematic one which responds to gravity and jetpack thrust. As soon as I detect a ground landing though, the rigid body is switched to kinematic and my code changes to use the movement system described in the previous article. If the player applies thrust, or if gravity from another body begins to pull him in a new direction, we switch back to non-kinematic again to allow the forces to take control.
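The switching rule boils down to a tiny state machine. The sketch below is plain C#. The three input flags are hypothetical stand-ins for whatever ground check, trigger reading and gravity comparison you use, and in Unity the isKinematic result would be written to rigidbody.isKinematic. The text above doesn’t cover one case, so this sketch makes an assumption: it ignores a landing while the player is still thrusting.

```csharp
enum MovementMode { Flying, Grounded }

// Hypothetical per-frame inputs; in Unity these would come from the ground
// check, the trigger axis and the gravity manager.
struct MovementInputs
{
    public bool GroundContact;            // a ground landing was detected
    public bool ThrustApplied;            // the jetpack trigger is pressed
    public bool GravityDirectionChanged;  // another body starts pulling elsewhere
}

static class KinematicSwitchSketch
{
    // Returns the next mode; isKinematic is true only while grounded.
    public static MovementMode Next(MovementMode current, MovementInputs inputs,
                                    out bool isKinematic)
    {
        MovementMode next = current;
        if (current == MovementMode.Flying && inputs.GroundContact && !inputs.ThrustApplied)
        {
            // Landed: hand control to the kinematic walking code.
            next = MovementMode.Grounded;
        }
        else if (current == MovementMode.Grounded &&
                 (inputs.ThrustApplied || inputs.GravityDirectionChanged))
        {
            // Forces take over again.
            next = MovementMode.Flying;
        }
        isKinematic = next == MovementMode.Grounded;
        return next;
    }
}
```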

This works great and only has one real drawback – the bouncy, slightly untethered feeling of light gravity vanishes when walking on a low-gravity planetoid. That’s because gravity isn’t actually applied when the player character is on a surface, and we rely purely on the ground-snapping from the movement code to keep us attached. That may sound bad (and it’s definitely not ideal), but it’s not actually that big of a problem for my use case. If it becomes more important, I’ll look for a solution.

One other point about parenting objects – never parent an object to another with non-uniform scale. If your objects are rotated, this will introduce shear into the child object and you’ll get very odd results, usually manifesting as a sudden and gigantic increase in scale. Avoid at all costs!
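You can see the shear appear by building the matrices by hand. This minimal sketch uses System.Numerics matrices. They treat vectors as rows, so a child’s local matrix is multiplied by its parent’s, in that order. It then measures how far the child’s X and Y axes are from perpendicular. The helper names are made up for the example.

```csharp
using System;
using System.Numerics;

static class ShearSketch
{
    // World matrix of a child under a parent, using System.Numerics' row-vector
    // convention: the child's local transform is applied first, then the parent's.
    public static Matrix4x4 ChildWorld(Matrix4x4 childLocal, Matrix4x4 parentWorld)
        => childLocal * parentWorld;

    // Cosine of the angle between the child's world-space X and Y axes.
    // 0 means the axes are still perpendicular; anything else means shear.
    public static float AxisSkew(Matrix4x4 world)
    {
        Vector3 xAxis = Vector3.Normalize(Vector3.TransformNormal(Vector3.UnitX, world));
        Vector3 yAxis = Vector3.Normalize(Vector3.TransformNormal(Vector3.UnitY, world));
        return Vector3.Dot(xAxis, yAxis);
    }
}
```

Take a parent scaled (2, 1, 1) and a child rotated 45° around Z. The cosine between the child’s axes comes out at -0.6 instead of 0. With a uniform parent scale, the axes stay perpendicular.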

Alright! So now we can navigate between orbiting planetoids. What’s next, I wonder?

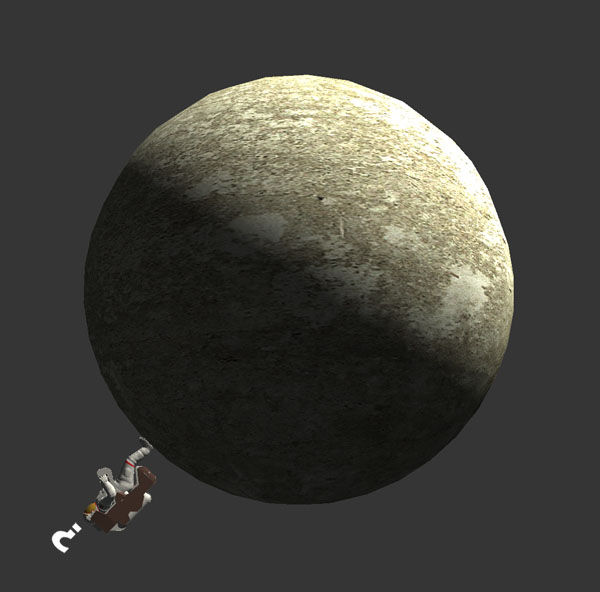

A probably very queasy astronaut goes for a ride in a busy solar system.

Unity Planetoid Experiments, Part 1

Recently I’ve been messing around in Unity with something intended for the Rift, though I don’t yet have my kit (and won’t for several months by the sounds of things). I’ve always been a fan of the innovative and beautiful Super Mario Galaxy, especially the planetoids and other structures that comprised its levels, each providing its own gravity. I wanted to try a first-person version of that concept, in a less fantastical setting. You can see an early version of what I came up with in this YouTube video. Along the way I ran into and overcame a few problems whose solutions seemed worth sharing, so here’s a quick review.

GRAVITY

So the first thing to do is to replace the simple default PhysX-provided gravity source with something a lot more flexible. I wanted to mix my planetoids with a more traditional “flat” terrain, so I created two types of gravity source – spheres and infinite planes. A spherical source pulls objects towards its position (usually the center of a sphere), whereas a plane source pulls objects towards the surface of the plane. If the plane pulled objects towards its position, they would be dragged horizontally inwards to the center of the plane object, which is obviously not what we want.

So for a spherical source, the gravity direction for a given object is just (gravity source position - object position), which points from the object towards the source. Gravity will naturally fall off over distance, so we’ll also want the magnitude of that vector to calculate the gravity strength. For an infinite plane the gravity direction is always the local down vector (i.e. -transform.up). For distance we want the shortest distance to the plane, which you could also describe as the vertical component, in the gravity source’s local space, of the vector from the plane to the object. We can get this with the dot product of the vector from the plane’s position to the object and the plane’s local up vector:

float distanceToPlane = Vector3.Dot(objectPos - planeTransform.position,
                                    planeTransform.up);
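Here are both source types side by side as a minimal sketch in plain C#, with System.Numerics standing in for Unity’s Vector3. Each returns the unit pull direction plus the distance used for falloff. The post doesn’t say which falloff curve it uses, so the linear fade between an inner and an outer radius below is purely an assumption, as are all the names.

```csharp
using System;
using System.Numerics;

static class GravitySourceSketch
{
    // Spherical source: pull toward the source position.
    public static Vector3 SphereDirection(Vector3 objectPos, Vector3 sourcePos, out float distance)
    {
        Vector3 toSource = sourcePos - objectPos;
        distance = toSource.Length();
        return distance > 0.0f ? toSource / distance : Vector3.Zero;
    }

    // Infinite plane source: pull along the plane's local down vector, with the
    // distance measured perpendicular to the plane (negative below it).
    public static Vector3 PlaneDirection(Vector3 objectPos, Vector3 planePos,
                                         Vector3 planeUp, out float distance)
    {
        Vector3 up = Vector3.Normalize(planeUp);
        distance = Vector3.Dot(objectPos - planePos, up);
        return -up;
    }

    // Hypothetical falloff: full strength up to innerRadius, fading linearly
    // to zero at outerRadius.
    public static float Strength(float distance, float baseStrength,
                                 float innerRadius, float outerRadius)
    {
        if (distance <= innerRadius) return baseStrength;
        if (distance >= outerRadius) return 0.0f;
        float t = (distance - innerRadius) / (outerRadius - innerRadius);
        return baseStrength * (1.0f - t);
    }
}
```

The gravity vector for a source is then the direction multiplied by the strength. With this falloff, a negative plane distance simply means full strength.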

Then all we need is a gravity manager object which is responsible for calculating the gravity vector at any given point. This is easily done by totaling all the gravity vectors which act on that point (i.e. those sources whose gravity vector has a magnitude > 0) and then dividing the resulting vector by the number of active sources we found. To get an object to be affected by the gravity, add a rigid body to it, turn off “Use gravity” to disable the standard effect, and finally add a script to each object with the following function:

void FixedUpdate()
{
    Vector3 gravityVector = GravityManager.CalculateGravity(transform.position);
    rigidbody.AddForce(gravityVector, ForceMode.Acceleration);
}

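A couple of things to note there. First, we’re using FixedUpdate because it runs at a steady, predictable rate, immediately before each physics step, so the forces we add are picked up by the next simulation update. Always add forces in FixedUpdate. Second, we apply the force with ForceMode.Acceleration, which ignores the rigid body’s mass, so light and heavy objects fall at the same rate, just as they would under real gravity.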
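The manager’s averaging step can be sketched in plain C# too. This sketch assumes each source can be represented as a function from a position to a gravity vector, returning zero where it has no effect. That delegate-based shape is for illustration only and isn’t how the post structures its GravityManager.

```csharp
using System;
using System.Collections.Generic;
using System.Numerics;

static class GravityManagerSketch
{
    // Each source maps a world position to a gravity vector (zero = no effect).
    public static Vector3 CalculateGravity(Vector3 position,
                                           IEnumerable<Func<Vector3, Vector3>> sources)
    {
        Vector3 total = Vector3.Zero;
        int activeSources = 0;
        foreach (Func<Vector3, Vector3> source in sources)
        {
            Vector3 g = source(position);
            if (g.LengthSquared() > 0.0f)
            {
                total += g;
                activeSources++;
            }
        }
        // Average over the sources that actually contributed.
        return activeSources > 0 ? total / activeSources : Vector3.Zero;
    }
}
```

Averaging rather than summing keeps overlapping fields from adding up to an unexpectedly strong pull. The trade-off is that a weak, distant source dilutes a strong nearby one.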

An interesting note about Super Mario Galaxy – it has all kinds of oddly-shaped landmasses, such as eggs, toruses, Mario’s face etc., which apply gravity to characters in a way which feels natural as you run over and around them. I suspect what they’re doing is using the inverted normal of a base layer of ground polygons that Mario is currently above as the gravity vector. The raycast to find the ground would ignore all other types of objects that would be in the way. This would be easy to add to this system and would be a nice experiment.
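That speculated approach is simple to sketch. Take the ground triangle under the character, compute its normal, and use the inverted normal as the gravity direction. This minimal plain-C# version assumes a winding order in which the cross product of (b - a) and (c - a) points out of the surface. In Unity, the RaycastHit.normal returned by the ground raycast would give you a surface normal directly.

```csharp
using System;
using System.Numerics;

static class SurfaceGravitySketch
{
    // Gravity from the ground triangle under the character: the triangle's
    // normal, inverted and scaled by a strength. Assumes (b - a) x (c - a)
    // points out of the surface for this winding order.
    public static Vector3 FromTriangle(Vector3 a, Vector3 b, Vector3 c, float strength)
    {
        Vector3 normal = Vector3.Normalize(Vector3.Cross(b - a, c - a));
        return -normal * strength;
    }
}
```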

MOVEMENT AND ORIENTATION

When moving the player (or any character) around a planetoid, presumably you want it to orient to the surface on which it’s standing. You also want movement to be completely smooth and to feel the same whether you’re on the top or bottom of the sphere. Now I have to break some bad news to you: the CharacterController that Unity provides won’t work for this use case. It doesn’t reorient the capsule it uses – it’s always upright no matter what the player object’s orientation is. In addition it comes with certain assumptions that don’t fit our needs, such as a world-space maximum slope value.

My initial solution to this problem was to take advantage of the rigid body which was already attached to the player object, and use a movement system which pushed the player around with forces. This worked great but ultimately it proved unworkable as the planetoid code moved along to the obvious next step. I’ll keep you in suspense until the next article if you can’t guess why, but for now just take my word for it – a force-based system isn’t going to work for us.

So, we’re going to reinvent a large part of the CharacterController wheel here. First make sure the player object has a capsule collider as well as the rigid body; rigid bodies don’t have any physical presence in the world so you still need the collider. The rigid body needs Is Kinematic set to true – that means we’ll be updating the transform directly rather than using forces to move it. More on this in my next article!

We need to decide on a step height, which will control the maximum height the capsule can “snap” if it finds an obstacle or a drop in the path of movement. Mine is set to 0.5m. My cheap step height shortcut is to pretend the capsule is actually at its height + stepHeight when moving it – the obvious disadvantage here is that you need at least a clearance of stepHeight above the player to move, but that’s not a problem in my case.
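The capsule cast in the movement loop needs the centers of the capsule’s two end spheres (capsuleTop and capsuleBottom), and the step height shortcut just shifts both of them up. Here’s a minimal sketch in plain C# with System.Numerics. It assumes position is the capsule center and up is the player’s current unit up vector (not world up, since we’re walking on spheres). The method name is made up for the example.

```csharp
using System;
using System.Numerics;

static class CapsuleSketch
{
    // Centers of the capsule's two end spheres, as capsule casts expect them.
    // The whole capsule is lifted by stepHeight (the shortcut described above),
    // so small obstacles pass underneath it, at the cost of needing extra headroom.
    public static void EndPoints(Vector3 position, Vector3 up, float height, float radius,
                                 float stepHeight, out Vector3 top, out Vector3 bottom)
    {
        float halfSegment = Math.Max(0.0f, height * 0.5f - radius);
        Vector3 lifted = position + up * stepHeight;
        top = lifted + up * halfSegment;
        bottom = lifted - up * halfSegment;
    }
}
```

For example, take a 2m tall capsule with a 0.5m radius, centered 1m up, and a 0.5m step height. Its end spheres sit at 1m and 2m.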

To handle possible collisions, each frame we’re going to move the capsule in a number of iterations. I won’t go into too much more detail as this is a large topic that probably requires an article of its own, but the basic pseudocode algorithm is:

// kMaxMoveIterations and kSkinWidth are small tuning constants (say 4 and
// 0.01m) that stop the loop spinning forever and keep the capsule just off
// any surface it touches.
int iterations = 0;
while (movementVector.sqrMagnitude > 0.0f && iterations++ < kMaxMoveIterations)
{
    Vector3 movementDirection = movementVector.normalized;
    float movementDistance = movementVector.magnitude;
    RaycastHit hitInfo;

    if (Physics.CapsuleCast(capsuleTop, capsuleBottom,
        capsuleRadius, movementDirection, out hitInfo, movementDistance))
    {
        // Hit something! hitInfo.distance is how far the capsule itself can
        // travel before touching, so no radius correction is needed here
        // (hitInfo.point, by contrast, is the contact point on the obstacle).
        float travel = Mathf.Max(0.0f, hitInfo.distance - kSkinWidth);
        Vector3 step = movementDirection * travel;
        transform.position += step;
        capsuleTop += step;
        capsuleBottom += step;

        // Slide: keep the leftover distance, minus the component pointing
        // into the surface (a reflected vector is another option).
        movementVector = Vector3.ProjectOnPlane(
            movementDirection * (movementDistance - travel), hitInfo.normal);
    }
    else
    {
        // Reached our destination (transform.position + movementVector).
        transform.position += movementVector;
        movementVector = Vector3.zero;

        // Now cast a ray (or capsule) downwards from the destination to find
        // the ground height, using a maximum distance to avoid snapping when
        // the ground is too far away, e.g. when walking off ledges. Then move
        // the capsule to that ground height plus half the capsule height,
        // since our position is the capsule center.
    }
}

The reason we keep moving once we’ve hit something is that otherwise the character would just hit an obstacle and stick to it. It’s always nicer to slide along it.
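Sliding comes down to removing the part of the leftover movement that points into the surface. Here’s that step as a minimal plain-C# sketch with System.Numerics. It computes the same thing as Unity’s Vector3.ProjectOnPlane for the hit normal.

```csharp
using System;
using System.Numerics;

static class SlideSketch
{
    // Removes the component of the remaining movement that points along the
    // surface normal, leaving only the part that runs along the surface.
    public static Vector3 SlideAlong(Vector3 remainingMovement, Vector3 hitNormal)
    {
        Vector3 n = Vector3.Normalize(hitNormal);
        return remainingMovement - n * Vector3.Dot(remainingMovement, n);
    }
}
```

Moving (1, 0, -1) into a wall facing +Z leaves (1, 0, 0). The character keeps its sideways motion and loses only the part heading into the wall.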

That should take care of movement; the ground snapping required while walking along a curved surface is handled by the algorithm above. So now we just have orientation to worry about! This can be surprisingly tricky until you hit on the right approach, especially around the poles, where you might encounter ugly rotational snapping issues. The key is one of Unity’s math functions: Quaternion.FromToRotation takes two direction vectors and returns a quaternion which rotates the first onto the second. Don’t forget to apply that to your original rotation afterwards.

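// Rotation that turns our current up vector to point away from gravity,
// applied on top of the current rotation
Quaternion desiredRotation = Quaternion.FromToRotation(transform.up,
    -vGravity.normalized) * transform.rotation;

// Blend towards it; scaling by Time.deltaTime keeps the blend rate
// independent of frame rate
transform.rotation = Quaternion.Slerp(transform.rotation,
    desiredRotation, maxGravityOrientationSpeed * Time.deltaTime);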

The above code finds the ideal rotation for the current gravity direction, then applies a slerp blend to make sure the transition is smooth.
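Outside Unity there’s no FromToRotation, so here’s a minimal sketch of the same idea in plain C# using System.Numerics. The rotation axis is the cross product of the two directions and the angle comes from their dot product, with a fallback axis when they point in opposite directions. The names are illustrative. Note that System.Numerics’ Quaternion.Concatenate(a, b) means “a, then b”, the equivalent of Unity’s b * a.

```csharp
using System;
using System.Numerics;

static class OrientationSketch
{
    // Stand-in for Unity's Quaternion.FromToRotation: the shortest rotation
    // taking direction 'from' onto direction 'to'.
    public static Quaternion FromTo(Vector3 from, Vector3 to)
    {
        Vector3 f = Vector3.Normalize(from);
        Vector3 t = Vector3.Normalize(to);
        float dot = Math.Max(-1.0f, Math.Min(1.0f, Vector3.Dot(f, t)));
        if (dot > 0.99999f) return Quaternion.Identity;   // already aligned

        Vector3 axis = Vector3.Cross(f, t);
        if (dot < -0.99999f)
        {
            // Opposite directions: any axis perpendicular to 'from' will do.
            axis = Vector3.Cross(f, Vector3.UnitX);
            if (axis.LengthSquared() < 1e-6f) axis = Vector3.Cross(f, Vector3.UnitY);
        }
        return Quaternion.CreateFromAxisAngle(Vector3.Normalize(axis), (float)Math.Acos(dot));
    }

    // Turn the current up vector to face away from gravity, applied on top
    // of the current rotation.
    public static Quaternion AlignToGravity(Quaternion current, Vector3 gravity)
    {
        Vector3 currentUp = Vector3.Transform(Vector3.UnitY, current);
        Quaternion correction = FromTo(currentUp, -gravity);
        // "current, then correction", i.e. Unity's correction * current.
        return Quaternion.Concatenate(current, correction);
    }
}
```

With the player upright and gravity pointing along +X, the new up vector comes out as (-1, 0, 0). From there, Quaternion.Slerp (which System.Numerics also provides) handles the smooth blend.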

OK, so now you should be able to set up a planet with spherical gravity and have a player character walk around it. In the next article, I’ll talk about extending the system to support moving and rotating planets, and moving the character between them. Thanks for reading!

ConsoleX: A Unity EditorWindow example

I was wondering recently why the Unity console window is so… spare. It lacks a lot of features you’d find in most modern logging implementations. Having some time on my hands at the moment, I thought I’d look into making a replacement. It’s maybe not the sexiest project, and I’m sure there are others out there (I haven’t looked) but it seemed like a good opportunity to dig into a deeper bit of editor scripting than I’ve done in the past.

My new console window has user-configurable channels, string filtering, Unity Debug.Log capturing and reporting, saving to a file and so on. As usual, pulling this off required a significant amount of time digging around with Google in forums, answers, random blogs etc. It might benefit someone to collect all of that in one place, so I won’t talk about feature design unless it overlaps with an interesting piece of Unity lore!

Initialization

The ConsoleX class (the X standing for Xtension or possibly Xtreme depending on your current caffeine levels) is derived from EditorWindow, and the script is placed in the Editor folder. Interesting note – it doesn’t have to be “Assets/Editor”, but any subfolder of Assets called Editor works too – Assets/MySubFolder/Editor, for instance.

The window is opened by the user from a menu option, so I have an Init function tied to a MenuItem attribute:

[MenuItem("Window/ConsoleX")]
static void Init()
{
    EditorWindow.GetWindow(typeof(ConsoleX));
}

Next, the window needs to initialize a few things. The best place to do that turns out to be the OnEnable function, which is called after Init whenever the window is reinitialized. This happens more often than you’d expect – the most common case is when play mode is ended.

void OnEnable()
{
    LoadResources();

    if (channels == null)
    {
        channels = new List();
        SetupChannels();
    }

    if (logs == null)
    {
        logs = new List();
    }

    ...

Notice that I’m checking for null objects in that snippet – I’ll explain why in the next section.

Serialization

When working with editor scripting, proper serialization of your objects is critical. The lack of it is the reason why, after going into and out of play mode, your shiny, incredibly useful new EditorWindow all of a sudden clears its data and starts spewing errors everywhere.

The first part of fixing this problem is of course understanding what is actually happening. It took me longer than it should have to find this essential blog post on the subject by Tim Cooper, but luckily it’s very thorough and I had my objects serializing within minutes. One thing to bear in mind that isn’t called out in that post is that static variables aren’t serialized. Public variables are serialized automatically, protected/private fields need the [SerializeField] attribute, but static vars aren’t serialized at all. That’s because serialization works on instantiated objects; static fields are not instanced. Something to keep in mind for your editor class data design.

Serialization is the reason we check for nulls in the OnEnable function – serialized data is restored into the class before OnEnable is called, so those fields may in fact already hold valid data at that point.

GUI issues

Laying out your EditorWindow GUI is mostly straightforward, but as soon as you want to do something a bit off the beaten path it can be tricky to get the controls looking exactly like you want them to.

You’ll probably need to use most or all of the following classes:

- GUIStyle – Styles can be supplied per control, and determine exactly how the control will look.

- GUI – Methods to add controls manually; that is, without any automatic placement.

- GUILayout – Methods to add controls which are automatically positioned, and specify how they’re sized.

- EditorGUI – Editor-focused version of the GUI class.

- EditorGUILayout – Editor-focused version of the GUILayout class. This is the GUI control class I used the most.

- EditorGUIUtility – Less frequently-used but still vital layout controls.

- EditorUtility – Utility class used for a lot of miscellaneous functionality.

These classes all interrelate somewhat and you need to use most of them together. You usually won’t mix layout with non-layout classes though; in other words you’ll probably use GUI and EditorGUI together, or GUILayout and EditorGUILayout instead.

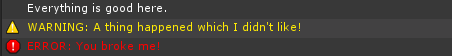

A good case study here would be my log lines themselves. I wanted to add icons before the text in some cases:

It turns out there are several ways to do that!

The first thing I tried was using an EditorGUILayout.LabelField, a function which has many different versions and has two different ways to achieve my goal: you can use a GUIContent object and populate it with both an image and text, or you can pass two labels into the function at one time, either GUIContent or plain strings.

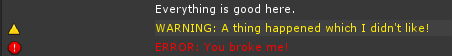

Using a single composite GUIContent object gave me a problem with long strings; the image part of the GUIContent would no longer be drawn when the text was longer than the width of the label. The second method of passing in two labels had several problems. First, you can only have one GUIStyle for both items. A pain, but in this case not a deal-breaker. Next, the gap between the first and second labels looks like this by default:

It took a LOT of searching before I found the solution to that: EditorGUIUtility has a function called LookLikeControls which allows the prefix label width to be set:

EditorGUIUtility.LookLikeControls(kIconColumnWidth);

The final problem was annoying: I’m using a scroll view (EditorGUILayout.BeginScrollView) to hold the log messages, and for some reason EditorGUILayout.LabelField didn’t give me a horizontal scrollbar for long strings. This can be fixed by using CalcSize on the style to find the desired width of the label, and a GUILayout option to size it properly (GUILayout options can be passed into all the layout control functions):

Vector2 textSize = labelStyle.CalcSize(textContent);
EditorGUILayout.LabelField(iconContent, textContent, labelStyle,
    GUILayout.MinWidth(kIconColumnWidth + textSize.x));

So after figuring all of that out, I decided I wanted a separate GUIStyle for the icons after all, and threw most of it away! This is what I ended up with:

EditorGUIUtility.LookLikeControls(iconColumnWidth);
Rect labelRect = EditorGUILayout.BeginHorizontal();
EditorGUILayout.PrefixLabel(logs[i].logIcon, logStyle, iconStyle);
GUILayout.Label(logs[i].logText, logStyle);
EditorGUILayout.EndHorizontal();

GUILayout.Label automatically sizes the label correctly so there’s no need to manually calculate and specify the control width. And using the separate PrefixLabel call allows me to specify a unique style for the icons. Done!

Transferring data between the game and the editor

Getting data from the editor to the game is trivial – editor classes can access game classes directly. Going the other way is a little more tricky as the inverse is not true.

My first solution was to log my data into a static buffer provided by a game-side class, and use OnInspectorUpdate polling to check the buffer and pull anything new over into the ConsoleX log. This worked fine, but OnInspectorUpdate is called ten times a second and is therefore introducing unnecessary overhead.

My friend Jodon (who runs his own company Coding Jar, check him out if you need any contracting work done!) suggested using C#’s events instead of polling. This works just as well and is much more efficient. I still need a game-side class, but now I define a delegate and an event in it. The main EditorWindow ConsoleX class uses another event (EditorApplication.playmodeStateChanged) to detect when the user enters play mode, then adds its own handler function to the game-side’s event.

The game-side class, ConsoleXLog:

public class ConsoleXLog : MonoBehaviour
{
    public delegate void ConsoleXEventHandler(ConsoleXLogEntry newLog);
    public static event ConsoleXEventHandler ConsoleXLogAdded;
    ...
}

…and in the editor class ConsoleX:

void PlayStateChanged()
{
    if (EditorApplication.isPlaying)
    {
        ConsoleXLog.ConsoleXLogAdded += LogAddedListener;
    }
}

It seems to take a few frames before the event is set up. If logs come in during that time, the ConsoleXLogAdded event helpfully reports as being null, so I can check for that and store the logs locally on the game side until the editor class has added its handler.
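Here’s one way the buffering could work, as a minimal sketch in plain C#. The class and member names are hypothetical stand-ins for ConsoleXLog and ConsoleXLogEntry. Flushing the backlog from a custom add accessor is an assumption for this sketch rather than something described above, and the code isn’t thread-safe.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical, simplified stand-in for ConsoleXLogEntry.
class LogEntrySketch
{
    public string Text;
    public LogEntrySketch(string text) { Text = text; }
}

// Hypothetical, simplified stand-in for the game-side ConsoleXLog.
static class GameSideLogSketch
{
    public delegate void LogAddedHandler(LogEntrySketch entry);

    // Backing field, so we can tell whether anyone has subscribed yet.
    static LogAddedHandler logAdded;
    static readonly Queue<LogEntrySketch> pending = new Queue<LogEntrySketch>();

    public static event LogAddedHandler LogAdded
    {
        add
        {
            logAdded += value;
            // Flush anything logged before the editor attached its handler.
            while (pending.Count > 0) logAdded?.Invoke(pending.Dequeue());
        }
        remove { logAdded -= value; }
    }

    public static void Log(string text)
    {
        var entry = new LogEntrySketch(text);
        if (logAdded == null) pending.Enqueue(entry);   // no listener yet: hold on to it
        else logAdded(entry);
    }
}
```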

I’ve still got some things to talk about – editor resources, EditorPrefs, and more – but I’ll leave that for another post. Hope this helps someone, someday!

Designing Games for the Oculus Rift

If you’ve been following game news for the last few months, you’ve probably heard of the Oculus Rift. An affordable yet advanced stereoscopic head-mounted device, it’s causing something of a resurgence in the seemingly dormant field of virtual reality games.

I’ve been going back and forth on ordering a devkit for a while, and finally took the plunge last week. This is already a cause of much regret, as I find myself at the back of a queue thousands of developers long. If I’m lucky I might see a device this side of the autumn equinox.

Patience never was my strong suit

Self-pity aside, I’m now turning my thoughts towards what I’m going to do with the thing. I realized pretty quickly that despite my long experience in game development, including working on many first-person shooters dating back to the original PlayStation in the mid-90s, I’ve never actually thought about designing specifically for VR.

On the face of it, you might think that a conventional FPS would be well-suited to a device like the Rift. After all, the viewpoint is the same, so how much more to it could there be? Well, as it turns out, a lot!

The first issue is the player’s disconnect between their awareness of their own body and the avatar they’re controlling in the game. VR delivers a sense of presence, yet our current control interfaces break that immersion just as it’s getting started. We can’t yet move our avatar by walking or running, we need to move a control stick. We can’t swing our arms around to aim a weapon; again, we need a control stick. When the player looks to the right or left in a VR game, their inclination may be to rotate their elbows or shoulders to try to bring a gun around with their viewpoint. That’s similar to how many people tilt a controller left or right when navigating a corner in a driving game, and just as ineffective.

From this we can see that controls that complement the VR experience will be a thorny issue, at least with current input devices. Team Fortress 2’s Rift integration apparently comes with seven different control schemes offering different methods of aiming and moving. That sounds like a lot, but more choice can only be good as the industry begins to figure out these new problems.

Next up are problems of scale. Speed especially is unrealistic in most games – this PAR article points out that the Scout character in Team Fortress 2 runs at 40mph! That’s incredibly disorienting for a player and may well be a cause of some of the not-infrequent reports of nausea and motion sickness that a device like the Rift can produce.

That Penny Arcade article talks about (and in fact is predicated entirely upon) another surprising point, one that I’ve seen reiterated many times by many different commentators: the true joy of a VR game seems to come mainly from immersion and the sense of physical freedom it conveys, rather than from any combat or competitive element. It’s been compared to lucid dreaming; the wish-fulfillment of finding ourselves a superhero, or journeying to another world.

“I could have easily spent an hour just flying around from rooftop to rooftop in Hawken, without any care for the game’s intended purpose as a mech war simulator.” – The Verge – I played Hawken on the Oculus Rift and it made me a believer

“I didnt race right now, only sitting in AIs car watching and looking around, but i could do that for hours being amazed and not getting bored :)” – vittorio, rFactor 2 forum post

Perhaps this is simply a passing phase, and as we get more used to and comfortable with VR devices we’ll want to get back to the game mechanics we’ve been challenging ourselves with for the last 40 years. Or maybe this really is a sea change in what we want from our games. Either way this development is more exciting to me than almost anything else I’ve ever seen in this industry – and I’ve been doing this a long time!

So what am I thinking about for my first hobbyist VR project? I have a specific design in mind but I’m not letting the cat out of the bag that easily! I’ll go over some of the factors I’m considering though.

In keeping with the articles I reference above, I’m thinking that a game based around exploration and free movement with possibly only some small element of challenge is probably the best idea at this point in time. It’s also been pointed out in several pieces of Rift coverage that vehicles seem to provide a better sense of immersion than controlling a humanoid avatar – the act of sitting in a virtual car or cockpit seems to map much more closely to the probable sitting posture of the player and removes some of the body-disconnect I discussed earlier.

The perfect VR game? Or would it be too much to handle?

I like the idea, suggested by my friend Martin in this thought-provoking article on VR design, of having a reason for the player to look around, to force that immersion provided by low-latency head-tracking to be used to its fullest. Maybe we don’t need a HUD anymore, and can look at our own character or equipment to get all of the state information we need. Maybe the player needs to identify things in the world by hunting them out visually. And maybe we are no longer forced to make important events happen directly in front of the avatar.

And finally, I’m a coder (and closeted designer), not an environment artist. For my one-man side projects, an abstract world is going to be easiest to put together, Unity Asset Store notwithstanding. Style is something I’m not too worried about for now. I imagine a VR environment will be effective with cubes as long as it’s lit reasonably well, and is built with lots of depth and reference points to provide an appropriate sense of speed.

These are only my initial thoughts. As I get stuck into this I expect I’ll change my mind about some things. I’ve likely got a few months before my kit arrives so I have time for some experimentation. If I produce anything interesting I’ll keep you updated!